
1 Convolution

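Fully connected NN: every neuron is connected to every neuron in the adjacent layers before and after it. The input is the features, and the output is the predicted result.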

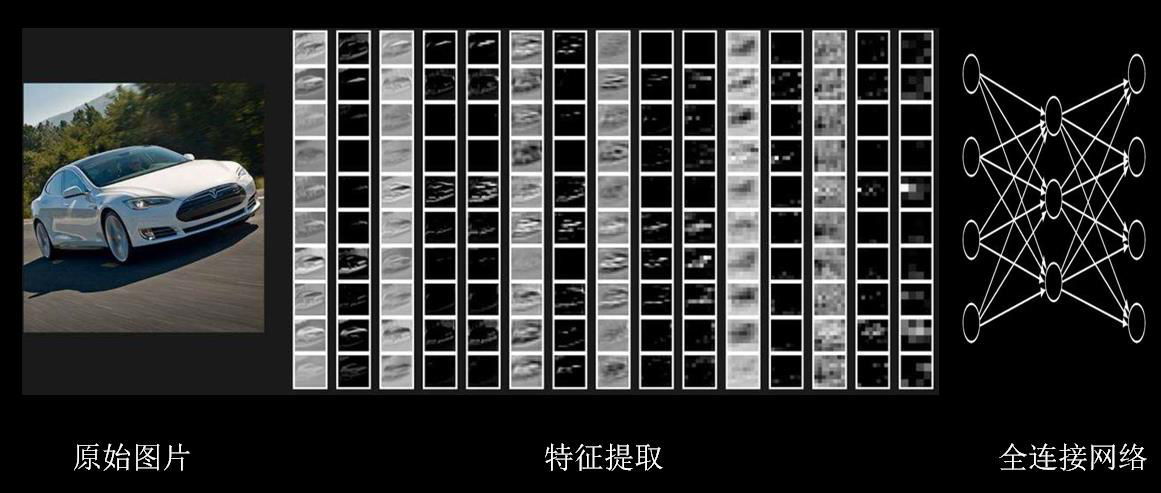

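Such a network has too many parameters to optimize, which easily leads to overfitting: every input pixel is connected to every neuron of the next layer, so the number of weights grows with the image size. To avoid this, raw images are generally not fed directly into a fully connected network in practice.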
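A rough count makes the problem concrete. The sketch below compares one fully connected layer with a hypothetical 500 hidden neurons (an assumed size, not taken from this article) against a convolutional layer with sixteen 3x3 kernels, whose weight count does not depend on the image size at all.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def conv_params(k_rows, k_cols, c_in, n_kernels):
    """Weights plus biases of one conv layer: one bias per kernel."""
    return k_rows * k_cols * c_in * n_kernels + n_kernels


HIDDEN = 500  # hypothetical hidden-layer size, chosen only for illustration

mnist_fc = dense_params(28 * 28 * 1, HIDDEN)    # 28x28 grayscale digit
color_fc = dense_params(224 * 224 * 3, HIDDEN)  # 224x224 RGB photo
conv_3x3 = conv_params(3, 3, 1, 16)             # sixteen 3x3 single-channel kernels

print(mnist_fc, color_fc, conv_3x3)  # 392500 75264500 160
```

Going from a small digit to an ordinary color photo multiplies the fully connected layer's parameters by almost 200, while the convolutional layer stays at 160.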

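Instead, features are first extracted from the raw image; the extracted features are fed into the fully connected network, which then computes the classification scores.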

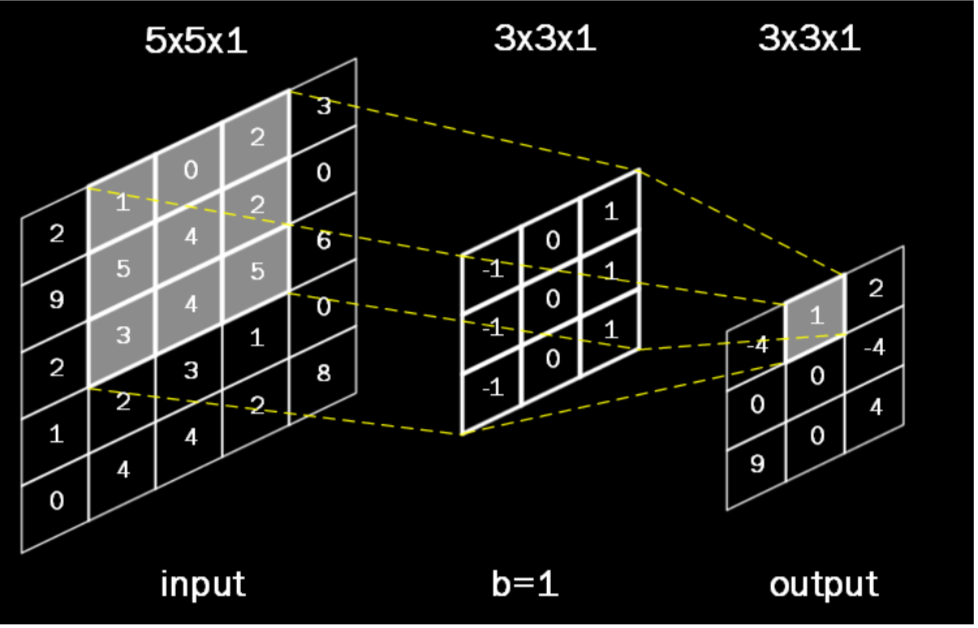

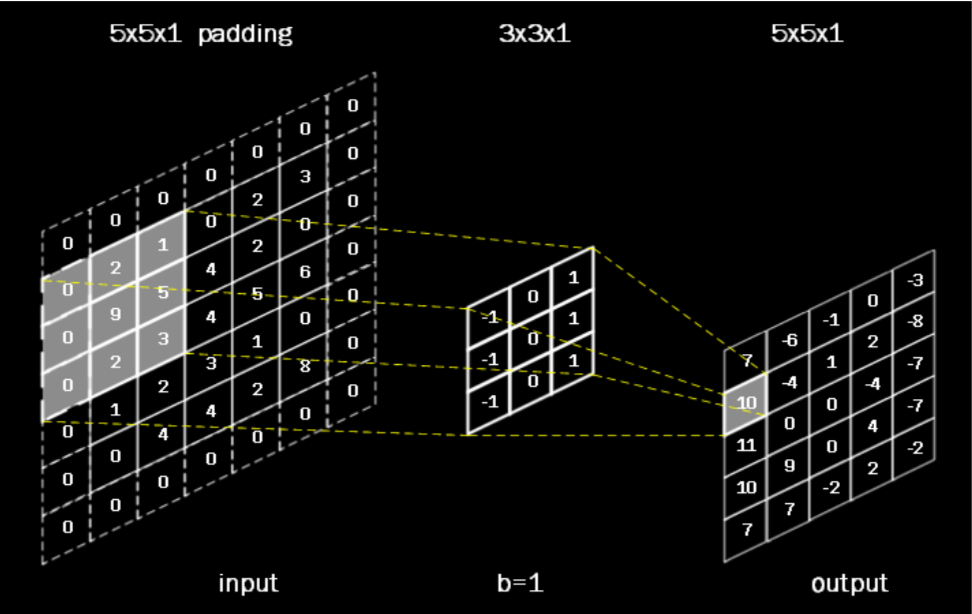

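Convolution is an effective way to extract image features. Usually a square convolution kernel is slid over every pixel of the image. Each pixel value in the region where the image and the kernel overlap is multiplied by the weight at the corresponding position of the kernel; the products are summed and a bias is added, giving one pixel of the output image.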
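A minimal NumPy sketch of this sliding-window computation for a single-channel image, stride 1 and no padding. The 5x5 input and the all-ones 3x3 kernel are toy values chosen so the sums are easy to check by hand.

```python
import numpy as np


def conv2d_single(image, kernel, bias=0.0):
    """Slide `kernel` over `image` (stride 1, no padding).

    Each output pixel = sum(overlapping pixels * kernel weights) + bias.
    As in tf.nn.conv2d, the kernel is not flipped (cross-correlation).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            window = image[i:i + kh, j:j + kw]  # region under the kernel
            out[i, j] = np.sum(window * kernel) + bias
    return out


image = np.arange(25, dtype=float).reshape(5, 5)  # toy 5x5 "image"
kernel = np.ones((3, 3))                          # toy 3x3 kernel, all weights 1
print(conv2d_single(image, kernel, bias=1.0))
# rows: [55, 64, 73], [100, 109, 118], [145, 154, 163]
```

The top-left output is 0+1+2+5+6+7+10+11+12 = 54 plus the bias 1; each step right adds 9 and each step down adds 45, because every covered pixel grows by 1 or 5.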

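Sometimes the input image is padded with a border of zeros (zero padding), which keeps the output image the same size as the input image (with a stride of 1).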
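The effect of padding follows from the output-size formula out = floor((n + 2p - k) / s) + 1 for an n x n input, a k x k kernel, p zeros on each side and stride s. With stride 1 and an odd k, p = (k - 1) / 2 keeps the size unchanged. A small check in NumPy (the helper name is made up for this sketch):

```python
import numpy as np


def conv_output_size(n, k, stride=1, pad=0):
    """Output side length for an n x n input, k x k kernel, `pad` zeros per side."""
    return (n + 2 * pad - k) // stride + 1


image = np.ones((5, 5))
padded = np.pad(image, 1)  # one ring of zeros around the 5x5 image -> 7x7

print(padded.shape)                   # (7, 7)
print(conv_output_size(5, 3))         # 3: without padding the output shrinks
print(conv_output_size(5, 3, pad=1))  # 5: with padding it matches the input
```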

In TensorFlow, convolution is computed as follows:

```python
tf.nn.conv2d(
```

Parameters:

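- Input description: given as [batch, rows, columns, channels]. `batch` is how many images are fed in at once, followed by the resolution of each image (for example 5 rows and 5 columns) and the number of channels the images contain: 1 for a grayscale image, 3 for a color image with red, green and blue channels.
- Kernel description: given as [rows, columns, channels, number of kernels], i.e. the kernel's row and column resolution, its number of channels, and how many kernels are used. For example, [3, 3, 1, 16] describes kernels of 3 rows by 3 columns with 1 channel, 16 of them in total. The number of kernel channels is determined by the input: it must equal the number of channels of the input image, which is why it is 1 here. Because there are 16 kernels, the output of the convolution has a depth of 16, i.e. 16 channels.
- Kernel stride: given as [1, row stride, column stride, 1]. The second element is the stride down the rows (vertical) and the third is the stride across the columns (horizontal); [1, 1, 1, 1] moves the kernel 1 pixel at a time in both directions. The first and last elements are fixed at 1.
- Padding: 'SAME' pads with zeros, 'VALID' does not pad.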
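To make the four parameters concrete, here is a NumPy sketch that follows the same conventions (NHWC input, [rows, cols, in_channels, n_kernels] kernels, 4-element strides, 'SAME'/'VALID'). It is an illustration written for this article, not TensorFlow's implementation; with TensorFlow installed, `tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='VALID')` on the same arrays should give the same output shapes.

```python
import math

import numpy as np


def conv2d_nhwc(x, w, strides, padding):
    """NumPy sketch of the tf.nn.conv2d shape conventions (not TF's own code).

    x:        [batch, rows, cols, in_channels]
    w:        [k_rows, k_cols, in_channels, n_kernels]
    strides:  [1, row_stride, col_stride, 1]
    padding:  'SAME' (zero padding) or 'VALID' (no padding)
    """
    _, in_h, in_w, c_in = x.shape
    kh, kw, k_c, n_out = w.shape
    assert k_c == c_in, "kernel channels must equal input channels"
    sh, sw = strides[1], strides[2]
    if padding == "SAME":
        # output keeps ceil(input / stride); any odd extra zero goes bottom/right
        out_h, out_w = math.ceil(in_h / sh), math.ceil(in_w / sw)
        pad_h = max((out_h - 1) * sh + kh - in_h, 0)
        pad_w = max((out_w - 1) * sw + kw - in_w, 0)
        x = np.pad(x, ((0, 0),
                       (pad_h // 2, pad_h - pad_h // 2),
                       (pad_w // 2, pad_w - pad_w // 2),
                       (0, 0)))
    else:  # 'VALID': the kernel never leaves the image
        out_h, out_w = (in_h - kh) // sh + 1, (in_w - kw) // sw + 1
    out = np.zeros((x.shape[0], out_h, out_w, n_out))
    for i in range(out_h):
        for j in range(out_w):
            patch = x[:, i * sh:i * sh + kh, j * sw:j * sw + kw, :]
            # sum over rows, cols and channels, for every kernel at once
            out[:, i, j, :] = np.tensordot(patch, w, axes=([1, 2, 3], [0, 1, 2]))
    return out


x = np.random.rand(2, 5, 5, 1)   # batch of 2 grayscale 5x5 images
w = np.random.rand(3, 3, 1, 16)  # sixteen 3x3 single-channel kernels
print(conv2d_nhwc(x, w, [1, 1, 1, 1], "VALID").shape)  # (2, 3, 3, 16)
print(conv2d_nhwc(x, w, [1, 1, 1, 1], "SAME").shape)   # (2, 5, 5, 16)
```

The output depth is 16 because there are 16 kernels, and the kernel's channel count has to match the input's 1 channel, exactly as described in the parameter list.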

2 Pooling

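Pooling shrinks a feature map by summarizing each window: max pooling keeps the largest value in the window, average pooling keeps the mean. TensorFlow provides a function for each: tf.nn.max_pool for max pooling and tf.nn.avg_pool for average pooling.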

```python
pool = tf.nn.max_pool(
```

Parameters:

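- Input description: [batch, rows, columns, channels], i.e. the number of images in a batch, their row and column resolution, and the number of input channels.
- Pooling window description: only the row and column size are described; the first and last elements are fixed at 1, for example [1, 2, 2, 1].
- Window stride description: only the vertical and horizontal strides are described; the first and last elements are fixed at 1, for example [1, 2, 2, 1].
- Padding: `padding` is either 'SAME' (zero padding) or 'VALID' (no zero padding).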

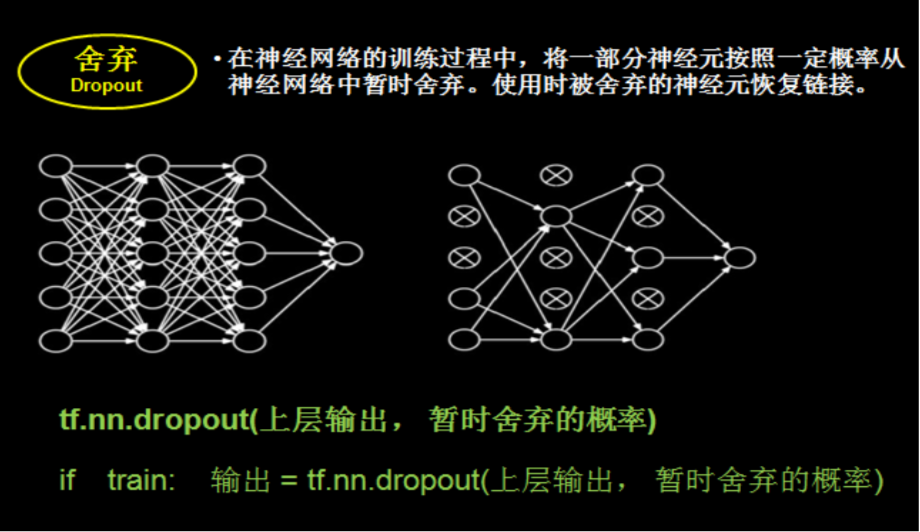
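As an illustration of what the two functions compute, the NumPy sketch below pools a single 4x4 feature map with a 2x2 window and stride 2, which corresponds to a window of [1, 2, 2, 1] and strides of [1, 2, 2, 1] with 'VALID' padding. It handles one image and one channel only and is not TensorFlow's implementation.

```python
import numpy as np


def pool2d(x, k, stride, mode="max"):
    """Pool one 2-D feature map with a k x k window ('VALID', no padding).

    Plays the role of a [1, k, k, 1] window and [1, stride, stride, 1]
    strides in tf.nn.max_pool / tf.nn.avg_pool, for one image and channel.
    """
    reducer = np.max if mode == "max" else np.mean
    out_h = (x.shape[0] - k) // stride + 1
    out_w = (x.shape[1] - k) // stride + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            window = x[i * stride:i * stride + k, j * stride:j * stride + k]
            out[i, j] = reducer(window)
    return out


fmap = np.array([[1, 3, 2, 4],
                 [5, 6, 1, 2],
                 [7, 2, 9, 0],
                 [1, 8, 3, 4]], dtype=float)
print(pool2d(fmap, 2, 2, "max").tolist())  # [[6.0, 4.0], [8.0, 9.0]]
print(pool2d(fmap, 2, 2, "avg").tolist())  # [[3.75, 2.25], [4.5, 4.0]]
```

Pooling has no weights to learn; it only halves the rows and columns here, which cuts the number of values passed on to later layers by a factor of 4.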

3 Dropout

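During neural network training, dropout is often used to counter having too many parameters: some neurons are dropped from the network with a given probability. The dropping is temporary and happens only during training; when the network is used, all neurons are restored. Dropout can effectively reduce overfitting.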

In practice, dropout is commonly applied when the network is built in forward propagation, to reduce overfitting.

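Dropout is usually placed in the fully connected part of the network. During training, writing `output = tf.nn.dropout(previous_layer_output, keep_prob)` randomly sets neurons to zero, and the zeroed neurons do not take part in parameter optimization in that training step. In TensorFlow 1.x the second argument, `keep_prob`, is the probability of keeping each neuron (a neuron is zeroed with probability 1 - keep_prob), and the surviving outputs are scaled by 1/keep_prob; TensorFlow 2.x replaces it with `rate`, the probability of dropping a neuron.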
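The following NumPy sketch shows the inverted-dropout scheme that tf.nn.dropout follows: each unit is kept with probability keep_prob during training and kept units are scaled by 1/keep_prob, so the expected output matches the unchanged output used at inference time. The function name and the `training` flag are choices made for this sketch.

```python
import numpy as np


def dropout(x, keep_prob, training=True, seed=None):
    """Inverted dropout, the scheme tf.nn.dropout uses.

    During training each unit is kept with probability keep_prob and
    kept units are scaled by 1/keep_prob; at inference x is returned as is.
    """
    if not training:
        return x
    rng = np.random.default_rng(seed)
    mask = rng.random(x.shape) < keep_prob  # True = neuron survives this step
    return np.where(mask, x / keep_prob, 0.0)


fc_out = np.ones(10)  # stand-in for a fully connected layer's output
print(dropout(fc_out, keep_prob=0.5, seed=0))          # each entry is 0.0 or 2.0
print(dropout(fc_out, keep_prob=0.5, training=False))  # unchanged: all 1.0
```

Scaling during training, rather than at inference, is what lets the trained network be used with all neurons restored and no extra correction.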

3.1 Convolutional Neural Networks

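A convolutional neural network can be viewed as two parts: one extracts features from the input image, and the other is a fully connected network. The difference is that what is fed into the fully connected network is no longer the raw image but the feature information produced by several rounds of convolution, activation and pooling.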
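To illustrate the two-part structure, here is a toy NumPy forward pass: one convolution, a ReLU activation, 2x2 max pooling, flattening, then a fully connected layer. The sizes and random weights are arbitrary choices for illustration, and nothing is trained.

```python
import numpy as np

rng = np.random.default_rng(0)


def conv_valid(img, kernel):
    """Single-channel convolution, stride 1, no padding."""
    kh, kw = kernel.shape
    h, w = img.shape[0] - kh + 1, img.shape[1] - kw + 1
    return np.array([[np.sum(img[i:i + kh, j:j + kw] * kernel)
                      for j in range(w)] for i in range(h)])


def max_pool_2x2(fmap):
    """2x2 max pooling with stride 2 (assumes even side lengths)."""
    h, w = fmap.shape
    return fmap.reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))


image = rng.random((10, 10))                          # toy 10x10 grayscale input
kernel = rng.standard_normal((3, 3))                  # toy kernel (learned in a real CNN)
features = np.maximum(conv_valid(image, kernel), 0)   # convolution + ReLU -> 8x8
pooled = max_pool_2x2(features)                       # pooling -> 4x4
flat = pooled.reshape(-1)                             # flatten -> 16 features

w_fc = rng.standard_normal((flat.size, 10))           # fully connected layer, 10 classes
logits = flat @ w_fc                                  # the FC layer sees features, not pixels
print(features.shape, pooled.shape, flat.shape, logits.shape)
# (8, 8) (4, 4) (16,) (10,)
```

The fully connected layer here needs 16 x 10 weights instead of the 100 x 10 it would need on the raw 10x10 pixels.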

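Since convolutional neural networks appeared, many classic architectures have been proposed, such as LeNet-5, AlexNet, VGGNet, GoogLeNet and ResNet. Each of them extends the same four basic operations: convolution, activation, pooling and fully connected layers.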

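LeNet-5 is one of the earliest convolutional neural networks. It was proposed by LeCun's team and effectively solved the problem of handwritten digit recognition.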
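The usual textbook description of LeNet-5 can be checked with the output-size arithmetic from the convolution section. The sketch below traces shapes through that classic layout (32x32 input, two 5x5 conv layers with 6 and 16 kernels, each followed by 2x2 pooling, then 120, 84 and 10 units). It ignores details of the original paper such as C3's partial channel connections and trainable subsampling, and it is not necessarily the configuration used in the mnist_lenet5_*.py files below; 28x28 MNIST digits are typically padded to 32x32 for this layout.

```python
def conv_out(n, k, stride=1, pad=0):
    """Side length after a k x k convolution or pooling window."""
    return (n + 2 * pad - k) // stride + 1


def lenet5_shapes(side=32):
    """Trace feature-map shapes through the classic LeNet-5 layout."""
    shapes = [("input", (side, side, 1))]
    side = conv_out(side, 5)
    shapes.append(("C1 conv 5x5, 6 kernels", (side, side, 6)))
    side = conv_out(side, 2, stride=2)
    shapes.append(("S2 pool 2x2", (side, side, 6)))
    side = conv_out(side, 5)
    shapes.append(("C3 conv 5x5, 16 kernels", (side, side, 16)))
    side = conv_out(side, 2, stride=2)
    shapes.append(("S4 pool 2x2", (side, side, 16)))
    flat = side * side * 16
    shapes += [("flatten", (flat,)), ("C5 fc", (120,)),
               ("F6 fc", (84,)), ("output", (10,))]
    return shapes


for name, shape in lenet5_shapes():
    print(f"{name:<25}{shape}")
```

Running it shows the spatial size shrinking 32, 28, 14, 10, 5, leaving 5 x 5 x 16 = 400 features for the fully connected layers.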

4 Code

4.1 mnist_lenet5_forward.py

```python
import tensorflow as tf
```

4.2 mnist_lenet5_backward.py

```python
import tensorflow as tf
```

4.3 mnist_lenet5_test.py

```python
import time
```

4.4 mnist_lenet5_app.py

Create a folder named image in the current working directory and put ten handwritten digit images named 0.png, 1.png, …9.png into it.

```python
import tensorflow as tf
```
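Before an image from the image folder can be fed to the network, it has to look like an MNIST sample. The function below is a hypothetical sketch of that preprocessing, assuming the picture has already been loaded as grayscale and resized to 28x28 (for example with Pillow's `Image.open(path).convert('L').resize((28, 28))`). The inversion step assumes dark ink on a light background, and `THRESHOLD = 50` is an arbitrary noise cut-off; neither is taken from the original mnist_lenet5_app.py.

```python
import numpy as np

THRESHOLD = 50  # hypothetical cut-off for faint background noise


def prepare_digit(gray_28x28):
    """Turn a 28x28 grayscale uint8 array into one MNIST-style NHWC sample.

    Assumes dark ink on a light background, so values are inverted to
    match MNIST's light digit on a black background.
    """
    img = 255 - gray_28x28.astype(np.float32)   # invert: ink becomes bright
    img = np.where(img < THRESHOLD, 0.0, 255.0)  # binarize, dropping faint noise
    img /= 255.0                                 # scale pixels to [0, 1]
    return img.reshape(1, 28, 28, 1)             # [batch, rows, cols, channels]


# Synthetic stand-in for a loaded image: white page with a dark vertical stroke.
page = np.full((28, 28), 255, dtype=np.uint8)
page[4:24, 13:15] = 0
sample = prepare_digit(page)
print(sample.shape, sample.min(), sample.max(), int(sample.sum()))
# (1, 28, 28, 1) 0.0 1.0 40
```

The final reshape produces the same [batch, rows, columns, channels] layout that the convolution input description above expects, with a batch of one image.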